Physically Based Shading

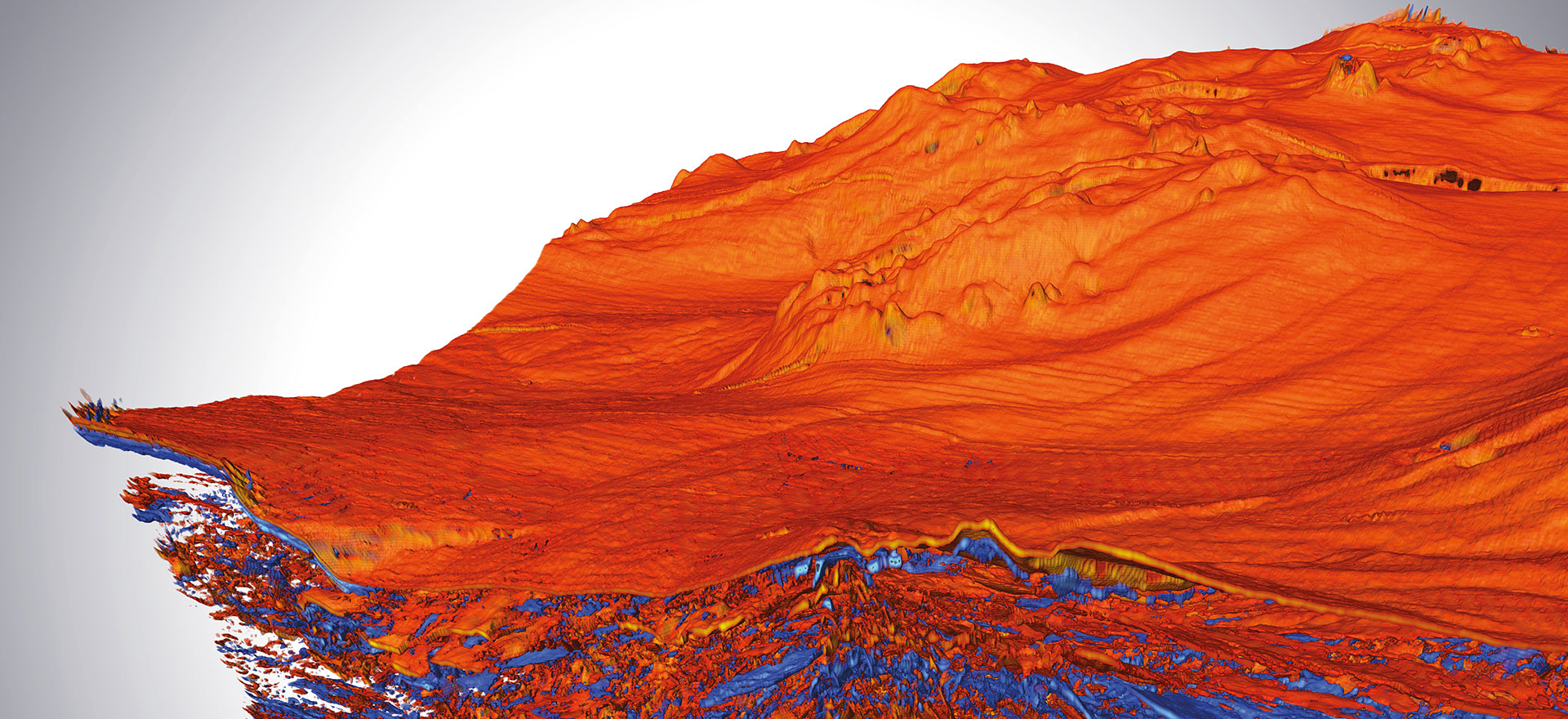

Classical techniques for rendering medical image data in 3D have been around since the 1980s, including multi-planar reformatting (MPR), maximum intensity projection (MIP), and direct volume rendering (DVR) with color and opacity mapping. These techniques are highly useful, but they rely on very simple models of color, lighting, and transparency that do not accurately represent how materials appear in the real world.
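To make the "very simple models" concrete, here is a minimal sketch of MIP and of DVR-style front-to-back compositing along a single viewing ray. It is an illustration only: the function names, the grayscale `ramp_transfer` mapping, and the sample values are assumptions made for this example and are not taken from any particular product.

```python
def max_intensity_projection(samples):
    """MIP: keep only the brightest sample encountered along the ray."""
    return max(samples)


def composite_front_to_back(samples, transfer):
    """DVR: accumulate color and opacity front to back along one ray."""
    color, alpha = 0.0, 0.0
    for s in samples:
        c, a = transfer(s)              # color (grayscale) and opacity of this sample
        color += (1.0 - alpha) * a * c  # contribution attenuated by what lies in front
        alpha += (1.0 - alpha) * a
        if alpha >= 0.999:              # early ray termination: nothing behind is visible
            break
    return color, alpha


def ramp_transfer(s):
    """Hypothetical transfer function: brighter and more opaque as intensity rises."""
    return s, 0.5 * s


# Hypothetical intensities sampled along one ray, ordered front to back.
ray = [0.0, 0.2, 1.0, 0.4]
mip_value = max_intensity_projection(ray)
dvr_color, dvr_alpha = composite_front_to_back(ray, ramp_transfer)
```

Neither function knows anything about light direction, shadows, or surface reflectance, which is the gap that physically based shading addresses.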

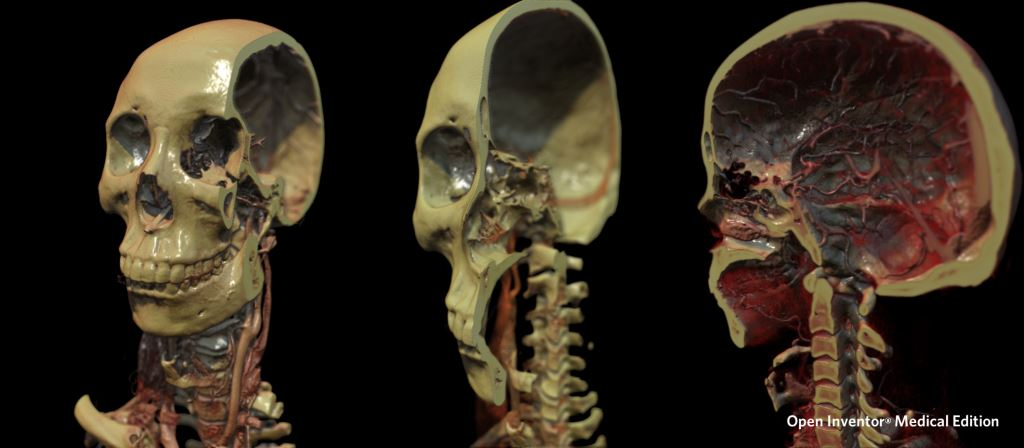

The human visual system can interpret the shapes and spatial relationships of objects from very subtle cues that arise from the complex interaction of light with real materials. We can take advantage of this capability using a set of techniques called "physically based shading". Physically based shading uses the principles of physics to simulate the interactions between light and materials realistically. Related to so-called cinematic rendering, these techniques build on algorithms developed in the gaming and entertainment industries to produce lifelike images. The algorithms are well known, but they were generally far too slow for interactive use until the massive computing power of graphics processing units (GPUs) became available.

Integrating physically based shading with volume rendering introduces a new generation of medical visualization. A more physically accurate presentation of medical volume data can bring substantial benefits to medical imaging tasks, including surgery planning, patient communication, and education. For example, beyond their use in planning the surgery itself, more realistic images of the body can be very helpful when explaining complex procedures to patients who are not trained to read CT and MRI images.

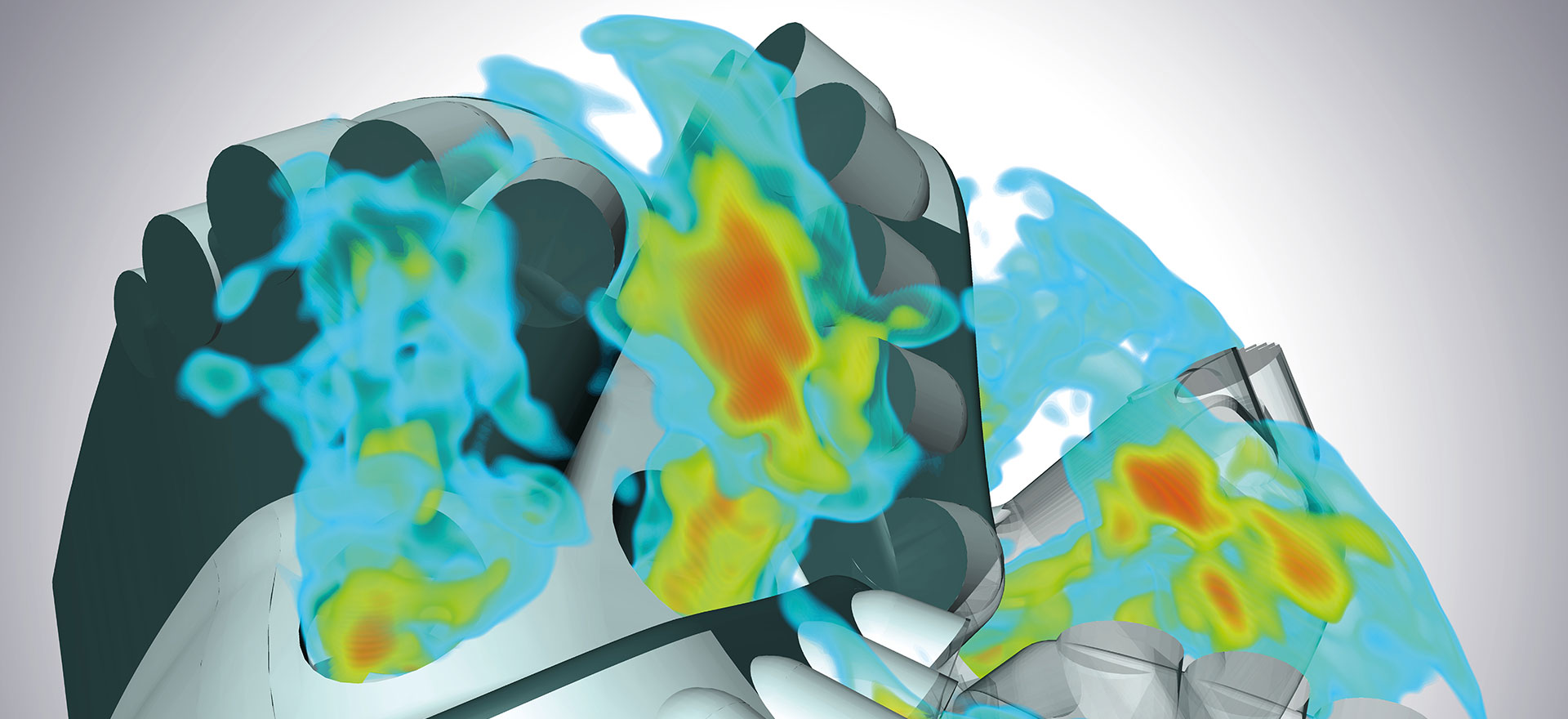

Physically based shading combines a variety of GPU-accelerated techniques, including image-based lighting, complex surface reflection modeling, ray-traced shadow casting, ambient occlusion, high dynamic range (HDR) rendering, and depth of field. These techniques can be used interactively on typical desktop machines with standard graphics hardware.
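As one small illustration of the high dynamic range item in that list, the sketch below compresses an unbounded scene luminance into a displayable 8-bit value. It uses the simple Reinhard operator followed by gamma encoding purely as an example; the text does not say which tone-mapping operator is actually used, and the function names and the gamma value of 2.2 are assumptions.

```python
def reinhard_tone_map(luminance):
    """Map a luminance in [0, inf) into [0, 1) with the basic Reinhard curve."""
    return luminance / (1.0 + luminance)


def to_display(luminance, gamma=2.2):
    """Tone-map, gamma-encode, and quantize to an 8-bit display value."""
    mapped = reinhard_tone_map(luminance)
    return round(255 * mapped ** (1.0 / gamma))


# Hypothetical scene luminances spanning several orders of magnitude.
display_values = [to_display(l) for l in (0.0, 0.1, 1.0, 10.0, 1000.0)]
```

Very bright highlights and dim shadow detail both stay within the displayable range, whereas simply clamping the values to a maximum would discard the highlights.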